The Information Disorder In Us

Beauty algorithms that mash fantasy with reality are a pervasive — and damaging — form of misinformation

In 1999 there was RateMyFace. The site allowed users to rate the attractiveness of photos submitted by others. In 2000, the idea took off with Hot or Not, which inspired social media sites like YouTube. Most famously, Mark Zuckerberg launched his spinoff and Facebook precursor, Facemash, for Harvard students in 2003. In 2007, BecauseImHot made the concept more toxic by deleting anyone with a rating below seven from the site. This all happened before algorithms infiltrated all aspects of our lives.

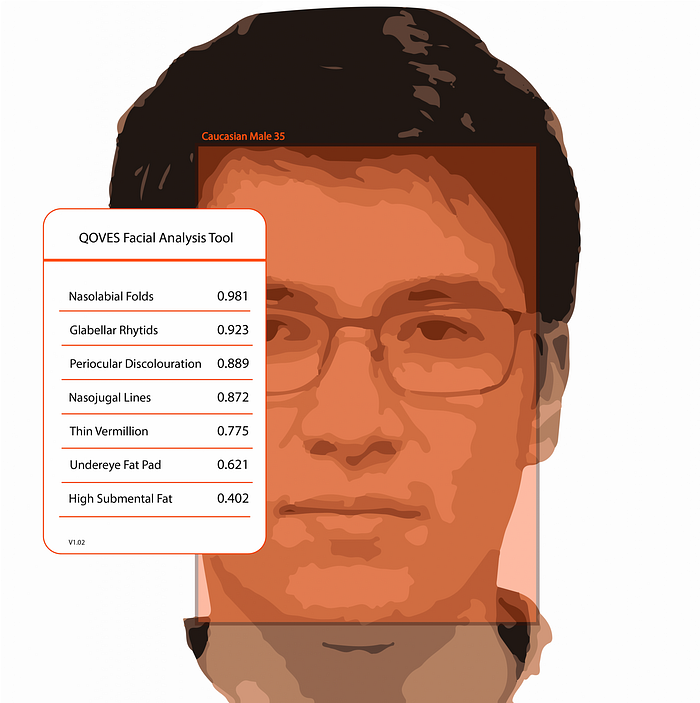

Appearance judgment is among the most engaging features on the most engaging apps. Instagram Explore pages determined which faces and bodies would get the most likes. Snapchat filters morphed features to fit “ideal” beauty standards. FaceTune allowed for personalized exploration into what you could look like. And apps like PrettyScale went old school by assigning users a beauty score, this time with facial analysis tech.

We now have a better sense of the effects. From Instagram Face to Snapchat Dysmorphia to the FaceTune Boom, algorithm-induced anxiety has led millions to seek out plastic surgery or cosmetic services in an attempt to resemble altered versions of themselves or others. Mental health, adolescent confidence, diversity and inclusion, and physical behaviors have all suffered as a result — especially among young girls.

MIT notes that young girls have become subjects in an experiment that shows how technology changes identity formation: how we represent ourselves and relate to others. The Guardian reported that these algorithms reflect Eurocentric beauty biases, causing even more potential damage for girls of color. Even those who opt out of using filters will have trouble avoiding content fueled by them. The EU’s AI ethics project, SHERPA, points out that dating apps use beauty algorithms to match people they think are equally attractive. At the same time, social media platforms like TikTok have deployed similar tech to promote content by good-looking people. Imagine the effects of knowing that your dating prospects or the success of your online content rely on your level of attractiveness.

With consequences so severe, how far should the tech be allowed to go? At what point do AR filters and “beauty algorithms” go from fun to dangerous?

One way to think about it: the technology behind image-altering filters is not far off from that of other doctored media like deepfakes. And the outcomes are similar. Both can lead to damaging forms of misinformation. People are being led to see the world, and themselves, in misleading, inaccurate ways.

As with all forms of misinformation, financial incentives promise to fuel tech that affects how we see ourselves. Klaus Schwab, Executive Chairman of the World Economic Forum, suggested where this might lead: “New technologies are fusing the physical, digital and biological worlds, impacting all disciplines, economies and industries, and even challenging ideas about what it means to be human.”

In our studies of information disorder, we’re widening the definition to include media formats that intentionally or unintentionally mislead to cause harm. As more users embrace AI and AR filters, conversations about a different type of disorder, the effects inside of us, cannot be overlooked.

For more updates on navigating information disorder, subscribe to the Media Genius newsletter.
